Blog: Process & Challenges in Modern Preclinical Drug Discovery

23 April 2026

Drug discovery identifies and characterizes molecules with the potential to safely modulate disease and progress into development. The process spans target identification/validation, hit discovery, lead optimization, and candidate selection before entering clinical phases. Time to market often exceeds a decade, failure rates approach 90%, and average R&D costs have risen from ~$800M to >$2.2B. Many failures originate in preclinical discovery from insufficient target validation, weak mechanistic data, emerging toxicity, or poor biological alignment. With much of the ‘low-hanging fruit’ already discovered, modern discovery increasingly depends on improving confidence at each stage through robust assay systems, deeper biological insight, predictive computational tools, and early safety testing.

At Excellerate Bioscience, we support preclinical drug discovery through target profiling, validation, tool generation, functional/phenotypic assays, and candidate profiling, with deep expertise in areas such as GPCR pharmacology, ligand binding kinetics, and disease-relevant models across immunology, respiratory, and metabolic disease. We place strong emphasis on delivering with reliability, transparency, and translational relevance. Because so many programs depend on decisions made at the preclinical stage, we believe this is where risk can be reduced most effectively. This article reviews the challenges and innovations in modern drug discovery, highlights common failure points, and outlines practical approaches to improving preclinical success.

Key challenges in effective implementation and application of AI/ML tools in modern drug discovery. Taken from Jarallah et al., 2025.

Addressing Key Challenges in Modern Preclinical Discovery: Opportunities, Limitations and Recommendations

The best-documented failure points in preclinical discovery cluster around four areas:

i. Data quality and reproducibility

ii. Translational misalignment

iii. Incomplete early safety profiling

iv. Inefficient screening cascades

Advances in assay design, model systems, data integration, and computational tools (including AI/ML) are increasingly being applied to address these recurring challenges, offering new opportunities to strengthen decision-making in preclinical discovery. These approaches are now being more widely integrated across discovery pipelines to reduce manual bottlenecks and improve the consistency and robustness of biological data interpretation. Importantly, AI/ML represents one component within a broader set of experimental and analytical improvements, rather than a standalone solution. Here, we highlight key areas of opportunity for addressing today’s challenges in preclinical drug discovery, with emphasis on robust experimental design, biological relevance, and transparent data practices.

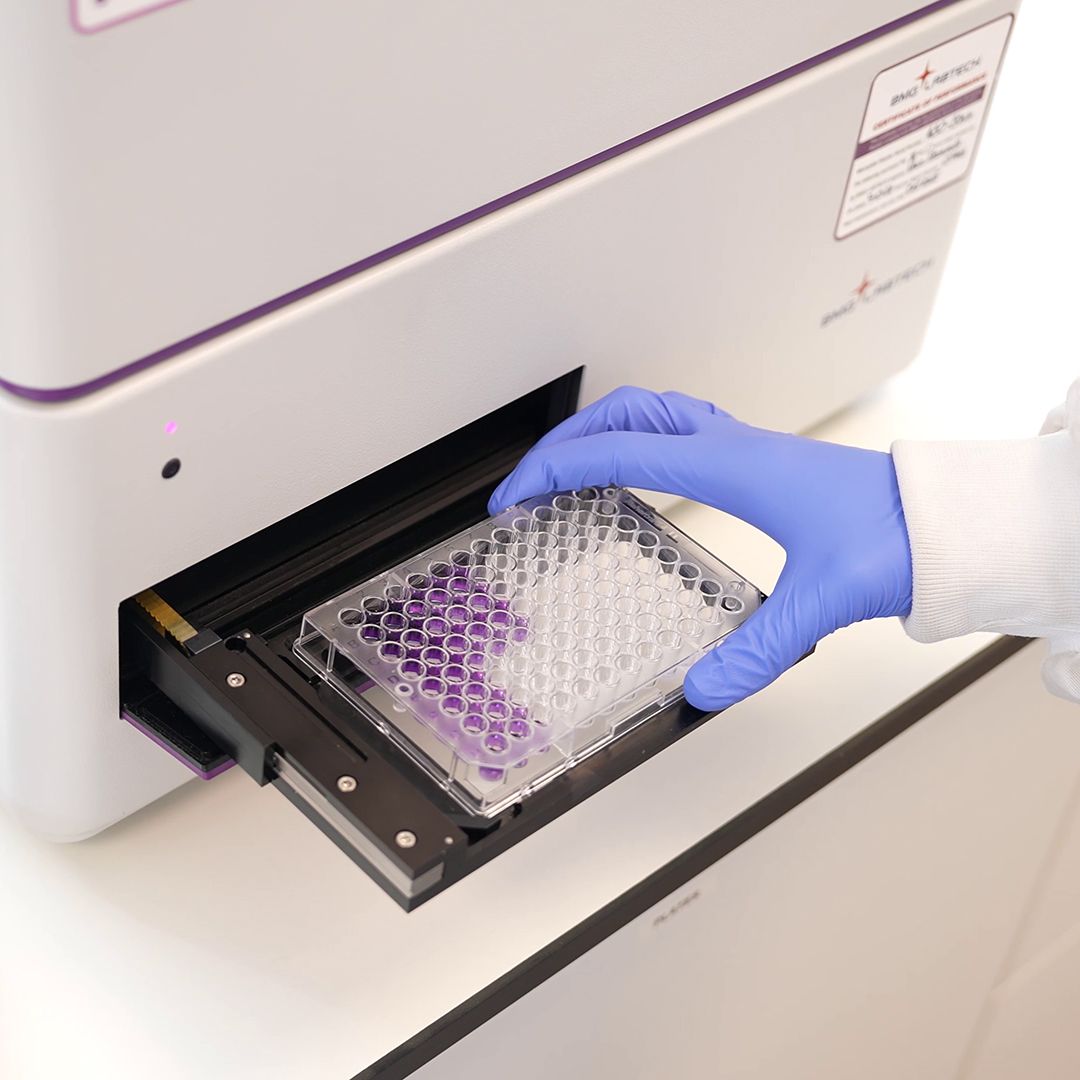

i. Data quality and reproducibility:

Data quality and reproducibility remain a fundamental constraint in preclinical discovery: variability in annotation, formatting, and experimental design, together with missing metadata, limits confidence in downstream interpretation and model-driven insight. Data clarity matters more than data volume, particularly where computational models may amplify underlying dataset bias.

Analytical support: AI/ML can extract biological signals from complex datasets (e.g. imaging, “omics”, phenotypic profiling), improving interpretability in high-dimensional systems.

Core limitation: Outputs remain highly sensitive to input quality; poorly annotated datasets (“garbage in, garbage out”) can reinforce bias and reduce reliability.

Enabling practices: Standardised metadata, consistent assay QC criteria (e.g. Z′ factor, signal-to-background ratio, control performance), reagent traceability, and structured data capture improve reproducibility, supported by stronger governance around transparency and data integrity.

Integrated workflows: Hybrid experimental/computational approaches, including iterative refinement and incorporation of negative data, improve robustness over time. Cultural acceptance of failure further strengthens model training, assay design, and decision confidence.
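The assay QC criteria named above (Z′ and S:B) can be made concrete with a short calculation. The following is a minimal sketch, not a prescribed QC procedure: it uses the standard definition Z′ = 1 − 3(σ_pos + σ_neg) / |μ_pos − μ_neg| and takes S:B as the ratio of the control means. The function names, the example plate values, and the 0.5 acceptance threshold (a commonly quoted rule of thumb) are illustrative assumptions; real acceptance criteria are assay-specific.

```python
from statistics import mean, stdev


def z_prime(positive, negative):
    """Z' factor from positive- and negative-control wells.

    Z' = 1 - 3 * (sd_pos + sd_neg) / |mean_pos - mean_neg|
    """
    separation = abs(mean(positive) - mean(negative))
    if separation == 0:
        raise ValueError("control means are identical; Z' is undefined")
    return 1.0 - 3.0 * (stdev(positive) + stdev(negative)) / separation


def signal_to_background(positive, negative):
    """S:B, taken here as mean positive-control signal over mean background."""
    return mean(positive) / mean(negative)


def plate_passes_qc(positive, negative, min_z_prime=0.5):
    """Assumed rule of thumb: accept a plate when Z' meets the threshold."""
    return z_prime(positive, negative) >= min_z_prime


# Hypothetical control wells from one plate (arbitrary signal units).
pos_controls = [100, 102, 98, 101, 99]
neg_controls = [10, 11, 9, 10, 10]

print(f"Z' = {z_prime(pos_controls, neg_controls):.3f}")
print(f"S:B = {signal_to_background(pos_controls, neg_controls):.1f}")
print("QC pass:", plate_passes_qc(pos_controls, neg_controls))
```

Recording both values alongside each plate, rather than only a pass/fail flag, keeps the QC decision traceable when thresholds are later revisited.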

ii. Translational misalignment:

Poor translation to human biology remains a persistent limitation in preclinical discovery: promising in vitro or early in vivo results do not reliably predict clinical efficacy.

Translational relevance: Incorporating human biology (cell-type variation, phenotypic diversity, donor variability) into experimental design/model training improves predictive alignment with clinical outcomes.

Core limitation: Simplified or non-human systems can generate high-confidence data with limited translational value, regardless of computational tools available.

Enabling practices: Increased use of physiologically relevant systems (e.g. disease-relevant primary cells, co-culture models, and donor-derived panels) improves biological context and clinical relevance not captured in simplified assay systems.

Integrated workflows: Combining diverse human-relevant datasets with computational analysis (e.g. automated phenotypic interpretation, AI/ML prioritisation) improves decision-making when biological variability is preserved rather than averaged out.
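To illustrate what "preserved rather than averaged out" can mean in practice, the sketch below summarises a response per donor before reporting any pooled value. It is an assumed, simplified reporting scheme, not a description of a specific analysis pipeline; the function name, donor labels, and response values are hypothetical.

```python
from statistics import mean, stdev


def summarise_by_donor(responses):
    """Summarise replicate responses per donor, then across donors.

    `responses` maps a donor ID to a list of replicate measurements
    (for example, % inhibition at a fixed compound concentration).
    """
    per_donor = {donor: mean(values) for donor, values in responses.items()}
    donor_means = list(per_donor.values())
    pooled = mean(donor_means)
    return {
        "per_donor_mean": per_donor,
        "pooled_mean": pooled,
        "min_donor_mean": min(donor_means),
        "max_donor_mean": max(donor_means),
        # Between-donor coefficient of variation, in percent.
        "between_donor_cv_pct": 100.0 * stdev(donor_means) / pooled,
    }


# Hypothetical % inhibition values: two responsive donors, one weak responder.
example = {
    "donor_A": [80, 84],
    "donor_B": [78, 82],
    "donor_C": [20, 24],
}

summary = summarise_by_donor(example)
print(summary["per_donor_mean"])
print(f"pooled mean: {summary['pooled_mean']:.1f}")
print(f"range: {summary['min_donor_mean']} to {summary['max_donor_mean']}")
```

In this made-up example the pooled mean sits between the two groups and describes none of the donors well, which is why the per-donor values and range are kept in the output.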

iii. Incomplete early safety profiling:

Safety liabilities remain a major source of late-stage attrition, with off-target effects often emerging only after significant investment in lead optimisation. Earlier assessments reduce downstream risk.

Predictive safety: Integration of computational tools with early safety/ADME profiling supports earlier identification of off-target liabilities.

Core limitation: Predictive models are constrained by incomplete or biased toxicology datasets, limiting extrapolation beyond well-characterised chemical space (e.g. PROTACs challenging Lipinski’s Rule of 5 assumptions).

Enabling practices: Early safety panels (cytotoxicity, mitochondrial, metabolic assays) anchor predictions experimentally, while broader toxicology and chemical databases improve coverage and reduce bias.

Integrated workflows: Combining in silico prioritisation with experimentally validated safety readouts improves confidence and enables earlier termination of high-risk compounds.
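The Rule of 5 point above can be sketched as a simple check that flags compounds sitting outside conventional small-molecule property space, where predictions from models trained mostly on Rule-of-5-like chemistry deserve extra experimental anchoring. The thresholds are Lipinski's published cut-offs (molecular weight ≤ 500 Da, calculated logP ≤ 5, ≤ 5 hydrogen-bond donors, ≤ 10 hydrogen-bond acceptors). Everything else is an assumption for illustration: the class and function names, the descriptor values (not measured data for any real compound), and the use of this flag as a crude proxy for model applicability. It is not a toxicity prediction.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Descriptors:
    mol_weight: float       # Da
    logp: float             # calculated octanol-water partition coefficient
    h_bond_donors: int
    h_bond_acceptors: int


def ro5_violations(d):
    """Return the list of Lipinski Rule of 5 criteria a compound violates."""
    violations = []
    if d.mol_weight > 500:
        violations.append("molecular weight > 500 Da")
    if d.logp > 5:
        violations.append("logP > 5")
    if d.h_bond_donors > 5:
        violations.append("H-bond donors > 5")
    if d.h_bond_acceptors > 10:
        violations.append("H-bond acceptors > 10")
    return violations


def needs_extra_experimental_anchoring(d, max_violations=1):
    """Assumed policy: flag compounds with more than `max_violations`."""
    return len(ro5_violations(d)) > max_violations


# Hypothetical descriptor sets, for illustration only.
small_molecule = Descriptors(350.4, 2.8, 2, 5)
protac_like = Descriptors(950.0, 5.5, 4, 14)

for name, d in [("small molecule", small_molecule), ("PROTAC-like", protac_like)]:
    print(name, ro5_violations(d), needs_extra_experimental_anchoring(d))
```

A flag of this kind does not say a compound is unsafe; it only marks where in silico safety predictions are being extrapolated and experimental readouts should carry more weight.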

iv. False positives, false negatives, and inefficient cascades:

Inefficient cascades continue to drive attrition through assay artefacts, limited mechanistic resolution, and poorly defined progression criteria. Orthogonal confirmation and early mechanism-of-action studies improve progression decisions.

Complex pattern recognition / advanced analysis: ML applied to high-content imaging, kinetic, and phenotypic datasets helps distinguish true biological signal from artefact, improving hit prioritisation. Complementary mechanistic and biophysical measures (e.g. binding kinetics, mechanism of action, residence time) add resolution beyond potency alone, strengthening SAR differentiation.

Core limitation: Computational ranking alone can introduce false confidence when datasets are narrow or biased to single assay formats.

Enabling practices: Orthogonal assay design and mechanistic confirmation reduce artefactual progression and improve hit-to-lead robustness.

Integrated workflows: Iterative feedback between experimental validation and computational prioritisation sharpens decision thresholds, improving discrimination between true and artefactual activity and reducing progression of low-confidence series.
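The claim that kinetic measures add resolution beyond potency alone can be shown with the standard relationships for a simple one-step binding model: K_d = k_off / k_on, residence time τ = 1 / k_off, and dissociation half-life t½ = ln 2 / k_off. The sketch below assumes that one-step model; the function names and the rate constants for the two compounds are hypothetical, chosen so that both have the same K_d.

```python
import math


def dissociation_constant(k_on, k_off):
    """Equilibrium K_d in M, from k_on (M^-1 s^-1) and k_off (s^-1)."""
    return k_off / k_on


def residence_time(k_off):
    """Residence time tau = 1 / k_off, in seconds."""
    return 1.0 / k_off


def dissociation_half_life(k_off):
    """Half-life of the ligand-target complex, ln(2) / k_off, in seconds."""
    return math.log(2) / k_off


# Two hypothetical compounds with identical affinity but different kinetics.
compounds = {
    "compound_A": {"k_on": 1e6, "k_off": 1e-2},  # fast on, fast off
    "compound_B": {"k_on": 1e4, "k_off": 1e-4},  # slow on, slow off
}

for name, k in compounds.items():
    kd_nM = dissociation_constant(k["k_on"], k["k_off"]) * 1e9
    tau = residence_time(k["k_off"])
    t_half = dissociation_half_life(k["k_off"])
    print(f"{name}: Kd = {kd_nM:.1f} nM, tau = {tau:.0f} s, t1/2 = {t_half:.0f} s")
```

Ranked on K_d alone, these two compounds would be indistinguishable, while their residence times differ by a factor of 100. That is the kind of separation that helps differentiate series within an SAR.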

Final Thoughts & Key Takeaways

Modern drug discovery is being reshaped by biology, chemistry, and computation working together. The greatest opportunities lie not in incremental improvements, but in changing how we ask questions and validate findings. AI/ML tools can accelerate insight and reduce costs, but only when built on reliable, translational data.

At Excellerate Bioscience, we help discovery teams reduce risk and accelerate decision-making by delivering high-quality preclinical evidence across target validation, screening cascades, mechanistic studies, and candidate profiling, with data integrity as a central focus. We believe that rigorous assay design, strong model relevance, early liability screening, and measured use of AI/ML tools provide a solid foundation in the evolving discovery ecosystem.

Key Takeaways:

- Preclinical stages remain a major source of downstream failure; robust early data matter.

- Differentiation comes from transparency, reproducibility, and human relevance.

- AI/ML tools amplify, but do not replace, rigorous experimental design.

- Negative data are more valuable than ever, strengthening confidence across both experimental and computational (AI/ML) workflows.

For teams seeking to strengthen discovery programs, explore our Excellerate Drug Discovery Capability pages.

References:

Artificial intelligence revolution in drug discovery: A paradigm shift in pharmaceutical innovation, 2025.

The AI drug revolution needs a revolution, 2025.